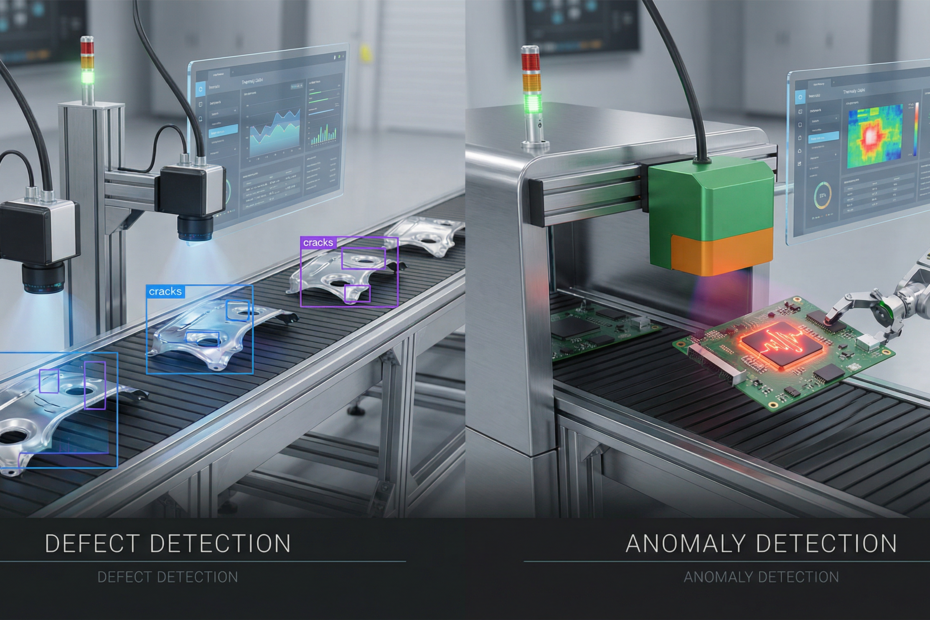

Factory quality teams evaluating vision AI often hear two terms: defect detection and anomaly detection. They sound similar, but they solve different problems and require different data, models, and deployment strategies. Choosing the wrong approach wastes time and money. Here’s the practical difference and when to use each — or both.

Defect Detection: You Know What You’re Looking For

Defect detection is supervised learning. You have labeled examples of known defect types — scratches, dents, cracks, discoloration — and you train a model to recognize them. The model learns: “this is a scratch, this is a dent, this is good.” At inference, it classifies each part or region into one of your predefined categories.

Data requirements are straightforward: you need hundreds to thousands of labeled images per defect type. Labels can be bounding boxes, segmentation masks, or image-level tags depending on whether you need localization. The more defect types you care about, the more labeled data you need. Rare defects are the hardest: if a defect appears in 0.1% of parts, you may need to oversample or augment to give the model enough examples.

Defect detection excels when you have clear, repeatable failure modes. Welding defects, surface defects on machined parts, assembly errors — if you can name and photograph them, supervised detection is the right tool. It gives you high precision and recall for known defects, and you can tune the model per defect type.

Anomaly Detection: You Don’t Know All Failure Modes

Anomaly detection is unsupervised or semi-supervised. You train on “normal” images only — no defect labels. The model learns what “good” looks like and flags anything that deviates. Anomaly detection answers: “is this part normal or not?” It does not answer: “what kind of defect is this?”

Data requirements are different: you need many images of good parts, ideally from production with natural variation. You do not need defect examples. That makes anomaly detection attractive when defects are rare, novel, or too varied to label. Think: new defect types that appear when a supplier changes, process drift, or “never seen before” failures that would be expensive or impossible to collect in labeled form.

Anomaly detection is weaker at explaining what’s wrong. It tells you “something is off” but not “it’s a scratch on the left edge.” For some workflows that’s enough — you flag for human review. For others, you need the defect type to route to rework or scrap.

When to Use Which Approach

Use Defect Detection When

- You have defined defect categories and can collect labeled examples

- You need to classify defect type for downstream actions (rework vs scrap, defect-specific repair)

- Defect rates are high enough that collecting 500+ examples per type is feasible

- Your failure modes are relatively stable — no constant stream of novel defects

Use Anomaly Detection When

- Defects are rare or novel — you can’t collect enough labeled examples

- You’re okay with “flag for review” rather than “classify defect type”

- You want a first line of defense against unknown failure modes

- You’re in a new line or product where defect taxonomy is still evolving

Combine Both When

Many factories use both. Run anomaly detection as a catch-all for “anything weird,” then run defect detection on flagged parts to classify known types. Anomaly detection catches novel defects; defect detection handles the bulk of known failures with higher precision. This hybrid approach reduces escape rate while keeping false positives manageable. For more on vision strategies, see our computer vision quality inspection pillar.

Data Requirements: A Quick Comparison

Defect detection: Labeled images for each defect type. Plan for 500–2000+ per class depending on complexity. Include variation in severity, orientation, and lighting. Rare defects need oversampling or synthetic augmentation.

Anomaly detection: Unlabeled images of good parts only. Thousands of normal examples with production variation. The model learns the distribution of “good”; anything outside that distribution is anomalous. No defect labels required.

Common Mistakes

Using anomaly detection when you have clear defect categories: If you already know your defect types and can label them, supervised defect detection will outperform anomaly detection for those classes. Anomaly detection adds value as a safety net, not as a replacement when labels exist.

Using defect detection when defects are novel or rare: If you can’t collect enough examples of a defect type, the model will underperform or miss it entirely. Anomaly detection can catch “not normal” even when you’ve never seen that exact defect before.

Ignoring false positive rate: In factories, false positives cost time — every false alarm triggers a human review. Anomaly detection tends to have higher false positive rates than well-trained defect detection. Tune thresholds and consider the cost of false alarms vs escapes.

How to Evaluate: Precision, Recall, and False Positive Rate

In a factory context, metrics matter differently than in generic ML:

- Precision (of your “defect” or “anomaly” predictions): What fraction of flagged parts are actually defective? Low precision means operators waste time on false alarms.

- Recall (sensitivity): What fraction of real defects do you catch? Low recall means defects escape to customers.

- False positive rate: How many good parts do you incorrectly flag? This drives operator fatigue and trust. If 50% of flags are false positives, operators start ignoring the system.

Balance these against your costs. A high-value product may tolerate more false positives to maximize recall. A high-volume line may need higher precision to avoid bottlenecking review. Define your target metrics before deployment and monitor them over time.

Next Steps

Start by mapping your defect taxonomy: what do you know, what can you label, and what’s truly unknown? If you have clear categories and enough examples, defect detection is your primary tool. If you have novel or rare defects, add anomaly detection as a complement. Many teams begin with defect detection for their top 3–5 defect types and layer in anomaly detection to catch the rest.

Kamna Ventures helps factory teams choose and deploy the right vision approach. We assess defect types, data availability, and workflow requirements before recommending a strategy. Explore our AI Incubation Lab and our computer vision quality inspection capabilities to get started.