Industrial vision AI pilots fail more often than they should. The technology works, but deployment details make or break the results. Manufacturing quality engineers and operations leaders considering vision AI need to know the pitfalls up front. The failures are rarely about the algorithm; they come from lighting, hardware, data, and problem definition. Here are the most common reasons pilots fail and practical ways to de-risk each one.

1. Bad Lighting

The pitfall: Inconsistent lighting, shadows, reflections, and glare produce images that vary from part to part and shift over the day. Models trained on “good” images fail when lighting changes. A pilot that works in the lab often fails on the line.

Mitigation: Design lighting before you train. Use directional, controlled illumination that highlights defects and suppresses ambient interference. Avoid mixed light sources (e.g., daylight plus fluorescents). Document your lighting setup and replicate it in training and production. Choose lighting that stays consistent when part position shifts slightly. Ring lights, dome lights, and structured lighting each have trade-offs; match the geometry to your part and defect type. For more on vision best practices, see our computer vision quality inspection pillar.
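
One way to enforce "document your lighting setup and replicate it" is to record simple image statistics when the lighting is signed off, then compare production frames against that baseline. The sketch below assumes 8-bit grayscale frames supplied as flat lists of pixel values; the names `FrameStats`, `frame_stats`, and `check_lighting` and all tolerance values are hypothetical placeholders to tune on your own line, not a standard method.

```python
from dataclasses import dataclass
from statistics import fmean, pstdev


@dataclass
class FrameStats:
    """Simple lighting statistics for one 8-bit grayscale frame."""
    mean: float       # average gray level, 0-255
    contrast: float   # population standard deviation of gray levels
    saturated: float  # fraction of pixels at 255, a rough glare indicator


def frame_stats(pixels: list[int]) -> FrameStats:
    """Compute brightness, contrast, and glare statistics from a flat list of pixel values."""
    return FrameStats(
        mean=fmean(pixels),
        contrast=pstdev(pixels),
        saturated=sum(1 for p in pixels if p >= 255) / len(pixels),
    )


def check_lighting(pixels: list[int], baseline: FrameStats,
                   mean_tol: float = 10.0, contrast_tol: float = 0.2,
                   max_saturated: float = 0.01) -> list[str]:
    """Compare a frame against the signed-off baseline; an empty list means no drift detected.

    The default tolerances are illustrative placeholders, not recommended values.
    """
    stats = frame_stats(pixels)
    problems = []
    if abs(stats.mean - baseline.mean) > mean_tol:
        problems.append(f"brightness drift: {stats.mean:.1f} vs {baseline.mean:.1f}")
    if baseline.contrast > 0 and abs(stats.contrast - baseline.contrast) / baseline.contrast > contrast_tol:
        problems.append(f"contrast change: {stats.contrast:.1f} vs {baseline.contrast:.1f}")
    if stats.saturated > max_saturated:
        problems.append(f"glare: {stats.saturated:.1%} of pixels saturated")
    return problems


# Synthetic example: a reference frame and the same scene 40 gray levels brighter.
reference = [100, 120, 140, 160] * 25
baseline = frame_stats(reference)
brighter = [p + 40 for p in reference]
print(check_lighting(reference, baseline))  # []
print(check_lighting(brighter, baseline))   # ['brightness drift: 170.0 vs 130.0']
```

Running a check like this on a sample of frames each shift is a cheap way to catch a failing bulb, an opened bay door, or new glare before the model's accuracy visibly drops.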

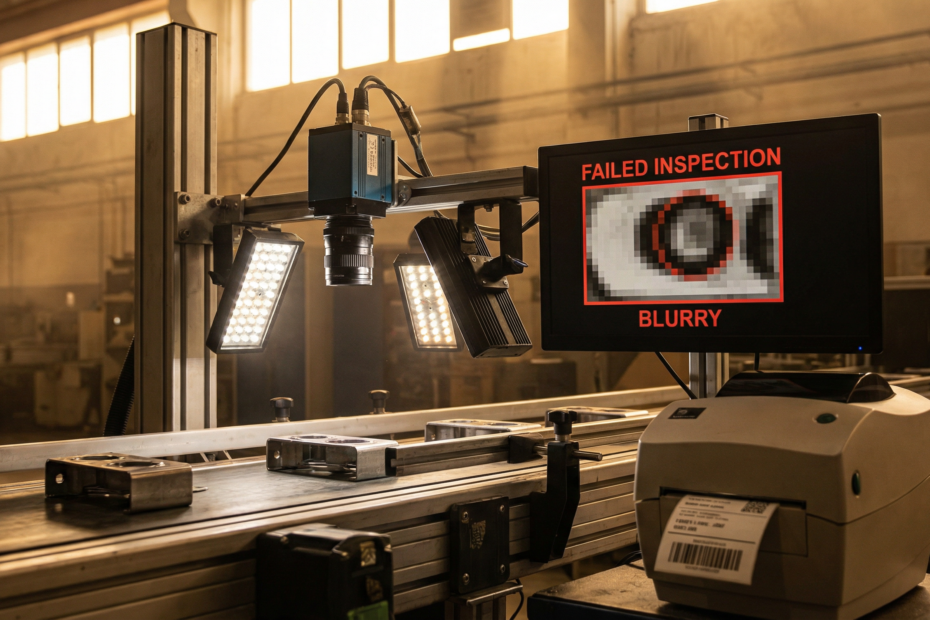

2. Wrong Camera or Lens Selection

The pitfall: Resolution too low to see the defects you care about. Frame rate too slow for line speed. Wrong field of view — you’re capturing too much or too little. Lens distortion or focus issues that weren’t accounted for in training.

Mitigation: Spec the camera and lens for your defect size, part speed, and inspection zone. Calculate the pixel density you need: if your smallest defect is 0.5 mm and you want 10 pixels across it, each pixel must cover 0.05 mm, so a 100 mm field of view needs at least 2,000 pixels across. Work backward from that number to sensor resolution, lens focal length, and working distance. Test with production parts before locking in hardware. Don’t assume higher resolution is always better: it adds cost and compute without benefit once you are above the needed density. For moving parts, keep the exposure time short enough that the part moves less than about one pixel during capture (strobed lighting helps), and make sure the frame rate or trigger captures every part at line speed.
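
The sizing arithmetic can be sketched in a few lines. This assumes a simple linear model (uniform pixel footprint, motion along one axis, free-running capture with a fixed frame overlap); the station dimensions and line speed in the example are hypothetical, and the function names are illustrative. Lens distortion and depth of field are not modeled.

```python
import math


def required_pixels(fov_mm: float, smallest_defect_mm: float, pixels_per_defect: int) -> int:
    """Pixels needed across one field-of-view dimension so the smallest defect spans `pixels_per_defect` pixels."""
    return math.ceil(fov_mm * pixels_per_defect / smallest_defect_mm)


def max_exposure_s(line_speed_mm_s: float, mm_per_pixel: float, max_blur_px: float = 1.0) -> float:
    """Longest exposure time that keeps motion blur at or below `max_blur_px` pixels."""
    return max_blur_px * mm_per_pixel / line_speed_mm_s


def min_frame_rate_hz(line_speed_mm_s: float, fov_along_motion_mm: float, overlap: float = 0.1) -> float:
    """Frame rate for free-running capture so consecutive frames overlap by `overlap` (a fraction of the FOV)."""
    return line_speed_mm_s / (fov_along_motion_mm * (1 - overlap))


# Hypothetical station: 120 x 80 mm field of view, 0.5 mm smallest defect, 10 px per defect,
# parts moving at 500 mm/s along the 80 mm axis.
defect_mm, px_per_defect = 0.5, 10
width_px = required_pixels(120, defect_mm, px_per_defect)
height_px = required_pixels(80, defect_mm, px_per_defect)
exposure = max_exposure_s(500, defect_mm / px_per_defect)
fps = min_frame_rate_hz(500, 80)
print(f"sensor >= {width_px} x {height_px} px, exposure <= {exposure * 1e6:.0f} µs, >= {fps:.1f} fps")
# sensor >= 2400 x 1600 px, exposure <= 100 µs, >= 6.9 fps
```

If parts are triggered individually (e.g., by a photo-eye) rather than captured free-running, the frame-rate requirement becomes "at least one frame per part at peak throughput" instead.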

3. Insufficient or Noisy Labels

The pitfall: Too few examples of defects, especially rare ones. Labels that are inconsistent (different annotators, vague guidelines). Labels that don’t match production reality — e.g., defects labeled in lab conditions that look different on the line. The model learns noise, not signal.

Mitigation: Define a labeling protocol: what counts as a defect, how to draw bounding boxes or masks, how to handle edge cases. Use multiple annotators with cross-checks for consistency. Aim for diversity — different defect severities, part orientations, and lighting conditions. Plan for 500–2000+ labeled images per defect type depending on complexity. Validate labels against production samples before training. Rare defects matter: if you see a defect type only 1% of the time, you need enough examples that the model can learn it. Consider oversampling or synthetic augmentation for rare classes.
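
A cross-check between annotators can be as simple as comparing their bounding boxes image by image and flagging images where the boxes do not line up. The sketch below assumes boxes in `(x_min, y_min, x_max, y_max)` pixel format, uses greedy one-to-one matching on intersection-over-union (IoU), and ignores class labels; the 0.5 IoU threshold and the function names are illustrative assumptions, not part of any specific labeling tool.

```python
Box = tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max) in pixels


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def disagreements(labels_a: dict[str, list[Box]], labels_b: dict[str, list[Box]],
                  min_iou: float = 0.5) -> list[str]:
    """Image IDs where two annotators' boxes do not match one-to-one at `min_iou` or better."""
    flagged = []
    for image_id in sorted(set(labels_a) | set(labels_b)):
        unmatched_b = list(labels_b.get(image_id, []))
        agree = True
        for box in labels_a.get(image_id, []):
            # Greedily pair each of annotator A's boxes with B's closest remaining box.
            best = max(unmatched_b, key=lambda other: iou(box, other), default=None)
            if best is None or iou(box, best) < min_iou:
                agree = False
                break
            unmatched_b.remove(best)
        if not agree or unmatched_b:  # leftover B boxes mean A missed a defect
            flagged.append(image_id)
    return flagged


annotator_a = {"img1": [(0, 0, 10, 10)], "img2": [(0, 0, 10, 10)], "img3": []}
annotator_b = {"img1": [(1, 1, 11, 11)], "img2": [(20, 20, 30, 30)], "img3": [(5, 5, 8, 8)]}
print(disagreements(annotator_a, annotator_b))  # ['img2', 'img3']
```

Flagged images are the ones to send back for adjudication and, often, the ones that reveal gaps in the labeling guidelines themselves.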

4. Solving the Wrong Problem

The pitfall: Detection, classification, and segmentation are different tasks. Using detection when you need pixel-level segmentation. Using classification when you need to localize defects. Picking an approach that doesn’t match the downstream action (e.g., “is there a defect?” vs “where exactly is it?”).

Mitigation: Define the output you need. If rework requires defect location, you need localization or segmentation. If you only need pass/fail, classification may suffice. If you need defect type and count, detection with classification is appropriate. Match the model architecture and labeling strategy to the use case. Our computer vision quality inspection page covers defect detection vs anomaly detection in more depth.
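
The decision rules above can be written down as a small helper, which is a useful way to force the "what do we actually need?" conversation before any labeling starts. This is a simplified sketch: the `Task` enum and `choose_task` function are hypothetical, and real projects may combine tasks (for example, anomaly detection plus classification).

```python
from enum import Enum


class Task(Enum):
    CLASSIFICATION = "classification"  # one label per image: pass/fail or a single defect type
    DETECTION = "detection"            # labeled boxes: defect type, location, and count
    SEGMENTATION = "segmentation"      # per-pixel mask: exact defect shape and area


def choose_task(needs_location: bool, needs_count: bool, needs_shape_or_area: bool) -> Task:
    """Pick the simplest task whose output supports the downstream action."""
    if needs_shape_or_area:
        return Task.SEGMENTATION  # e.g., reject only if scratch area exceeds a spec limit
    if needs_location or needs_count:
        return Task.DETECTION     # e.g., rework needs to know where each defect is
    return Task.CLASSIFICATION    # e.g., a simple pass/fail gate


# Rework operators need to find each defect, but not its exact outline.
print(choose_task(needs_location=True, needs_count=False, needs_shape_or_area=False).value)  # detection
```

Choosing the simplest adequate task matters because labeling cost rises steeply from image-level labels to boxes to pixel masks.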

5. Overfitting to Lab Conditions

The pitfall: Training data collected in a controlled lab. Production has different parts, lighting, backgrounds, and variability. The model performs well in validation but fails in the real environment.

Mitigation: Collect training data from production or production-like conditions. If that’s not possible, use data augmentation (rotation, lighting variation, background changes) to simulate production diversity. Validate on held-out production data before deployment. Run a pilot on the line with a small batch before full rollout.
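
A minimal augmentation sketch, assuming 8-bit grayscale images stored as lists of rows and that parts can legitimately appear in any 90-degree orientation on the line (if they cannot, drop the rotation). The gain and offset ranges are placeholder assumptions; in practice they should bracket the lighting variation actually measured in production. Background changes are not covered here.

```python
import random

Image = list[list[int]]  # 8-bit grayscale image as rows of pixel values


def adjust_lighting(img: Image, gain: float, offset: float) -> Image:
    """Scale contrast by `gain` and shift brightness by `offset`, clipping to 0-255."""
    return [[min(255, max(0, round(p * gain + offset))) for p in row] for row in img]


def rotate90(img: Image) -> Image:
    """Rotate an image 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]


def augment(img: Image, rng: random.Random,
            gain_range: tuple[float, float] = (0.8, 1.2),
            offset_range: tuple[float, float] = (-25.0, 25.0)) -> Image:
    """Return one randomly perturbed copy: a random quarter-turn rotation plus lighting jitter."""
    out = img
    for _ in range(rng.randrange(4)):
        out = rotate90(out)
    return adjust_lighting(out, rng.uniform(*gain_range), rng.uniform(*offset_range))


rng = random.Random(42)  # fixed seed so the augmented set is reproducible
part = [[90, 100, 110], [95, 105, 115]]
variants = [augment(part, rng) for _ in range(5)]
```

Augmentation widens what the model has seen, but it only approximates production; the held-out production validation set remains the real test.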

6. No Plan for Edge Cases

The pitfall: The model handles “normal” defects but fails on novel defect types, new part variants, or unusual conditions. No fallback when the model is uncertain. No process for retraining when the line changes.

Mitigation: Define edge cases up front: new defect types, part variants, and failure modes. Include “unknown” or “uncertain” as valid outputs, and route those parts to human review instead of forcing a pass/fail decision. Set a retraining cadence, and retrain whenever new defect types appear or the process changes. Monitor performance over time and trigger retraining when accuracy drifts.
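
Routing uncertain parts and watching for drift can be sketched together. This assumes the model outputs a reasonably calibrated defect probability and that a sample of parts gets a human-confirmed verdict (for example, from audits or the review queue). The thresholds, window size, and the names `route` and `DriftMonitor` are hypothetical.

```python
from collections import deque
from enum import Enum


class Decision(Enum):
    PASS = "pass"
    FAIL = "fail"
    REVIEW = "human_review"


def route(defect_probability: float, fail_above: float = 0.8, pass_below: float = 0.2) -> Decision:
    """Map a defect probability to an action; the uncertain middle band goes to a person."""
    if defect_probability >= fail_above:
        return Decision.FAIL
    if defect_probability <= pass_below:
        return Decision.PASS
    return Decision.REVIEW


class DriftMonitor:
    """Rolling agreement between model verdicts and human-confirmed verdicts."""

    def __init__(self, window: int = 500, min_accuracy: float = 0.95):
        self.results = deque(maxlen=window)  # oldest results drop off automatically
        self.min_accuracy = min_accuracy

    def record(self, model_said_defect: bool, human_said_defect: bool) -> None:
        self.results.append(model_said_defect == human_said_defect)

    def needs_retraining(self) -> bool:
        """True once the window is full and rolling accuracy is below the threshold."""
        if len(self.results) < self.results.maxlen:
            return False
        return sum(self.results) / len(self.results) < self.min_accuracy


print(route(0.55).value)  # human_review
```

The width of the review band is a business trade-off: a wider band catches more edge cases but sends more parts to people, so it is worth tracking review volume alongside accuracy.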

De-Risk Your Pilot

Vision AI can deliver real ROI — reduced escape rate, less scrap, scalable inspection — but only when deployment is done right. Address lighting, camera selection, and labeling before you train. Match the problem type to the right approach. Validate on production data. Plan for edge cases and ongoing maintenance.

The best pilots start with a clear success metric (e.g., defect escape rate, false positive rate) and a defined scope. Don’t try to solve every defect type in phase one. Pick the highest-impact defect, nail it, then expand. That approach reduces both technical and organizational risk.
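
Both metrics fall out of four confusion counts collected during the pilot. The sketch below defines escape rate as escaped defective parts divided by all defective parts, and false positive rate as rejected good parts divided by all good parts; some plants define escape rate per parts shipped (e.g., in ppm) instead, so agree on the definition before the pilot starts. The counts in the example are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class InspectionCounts:
    """Pilot confusion counts, where 'positive' means the part is defective."""
    true_reject: int   # defective parts the system rejected
    escapes: int       # defective parts the system passed (false negatives)
    false_reject: int  # good parts the system rejected (false positives)
    true_pass: int     # good parts the system passed

    def escape_rate(self) -> float:
        defective = self.true_reject + self.escapes
        return self.escapes / defective if defective else 0.0

    def false_positive_rate(self) -> float:
        good = self.false_reject + self.true_pass
        return self.false_reject / good if good else 0.0


counts = InspectionCounts(true_reject=48, escapes=2, false_reject=30, true_pass=970)
print(f"escape rate {counts.escape_rate():.1%}, false positive rate {counts.false_positive_rate():.1%}")
# escape rate 4.0%, false positive rate 3.0%
```

Because defective parts are usually rare, a pilot may need to run long enough (or be seeded with known-bad parts) to collect enough defective examples for the escape rate to be meaningful.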

Kamna Ventures helps manufacturers de-risk vision AI pilots from day one. We specify hardware, design labeling strategies, and validate models before deployment. Ready to move forward? Explore our AI Incubation Lab and our computer vision quality inspection capabilities.